Moving data to and from the cluster

Please read the section Storage before reading this section.

When moving data to the shared folders, please follow these common sense rules:

- Create folders for everything you want to share.

- If you produced the data yourself, it is good practice to create a folder named after your username and place everything in it.

- If the data belongs to a specific experiment, dataset or the like, give the folder a name that reflects this and that everybody can easily understand.

- Don't overdo it. Only copy data that you or your colleagues need. This is a shared facility.

- Don't remove other users' files unless you have told them and they are OK with it. This is a shared facility.

- Don't expect the contents of any scratch folder to always be there. At the moment, however, there is no deletion policy for scratch folders.

Moving data for users of Mathematical Physics and generic Lunarc users

Users of Mathematical Physics, as well as any other Lunarc user, can use their favorite tool to download and upload data from their own workstation, the Aurora front-end or the Aurora computing nodes. Some of those tools are described on the Move data to and from the Iridium Cluster pages.

Moving data for users of Nuclear, Theoretical and Particle Physics

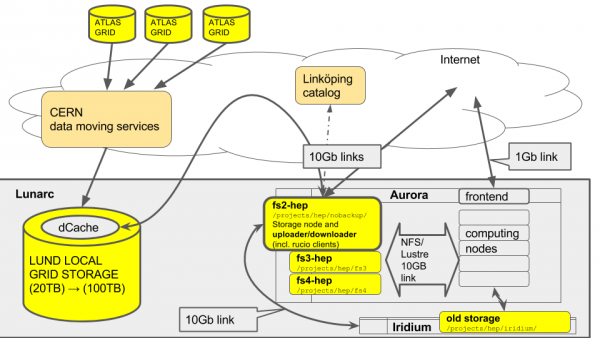

Users of these divisions can access the special node fs2-hep, which is meant for downloads and uploads.

These users (in particular Particle and Theoretical Physics) might need to download huge amounts of data. The goal of this node is to offload the Lunarc internal network and to avoid using computing nodes as mere download nodes.

fs2-hep has a direct, very fast connection to the internet for downloads. However, incoming connections are rejected: the node can download and upload, but it cannot be used as a source to retrieve data from OUTSIDE Lunarc. More info below.

An overview of the upload/download components is shown in the slide below:

source: https://docs.google.com/presentation/d/1agBLlMrMe3Pu1RGou5ut5LE0dgzeXFGztKu4Gjn_QBE/edit?usp=sharing


Using the downloader node

- Log in to aurora.lunarc.lu.se

- Log in to fs2-hep: ssh fs2-hep

- Choose one of the upload/download methods below.

- The download destination MUST be one of the /project/hep/ folders or your home folder. All other folders are not writable by your user. Everything in /tmp will be deleted regularly.

Uploading/Downloading data to/from an external source to Aurora

- Use your favourite download software. Some suggestions are available at Moving data to and from Iridium

- Use your home folder or one of the /project/hep folders as the destination folder. Any other path is not writable by your user. The /tmp folder is cleaned regularly, so you should not use it.
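The destination rule above can be sketched as a small shell check. This is only an illustration: the function name is_valid_destination and the variable HEP_PROJECT_ROOT are hypothetical, and the default project path is simply the one quoted in this section. It compares path strings only and does not test whether the folder is actually writable.

```shell
# Assumed variable name; the default is the project path mentioned in this section.
HEP_PROJECT_ROOT="${HEP_PROJECT_ROOT:-/project/hep}"

# Returns 0 if the path is inside the home folder or the project folders,
# and 1 otherwise (including anything under /tmp).
is_valid_destination() {
    local dest="$1"
    # /tmp is cleaned regularly, so it is never accepted.
    case "$dest" in
        /tmp|/tmp/*) return 1 ;;
    esac
    # Accept the home folder and anything below it (only if HOME is set).
    if [ -n "${HOME:-}" ]; then
        case "$dest" in
            "$HOME"|"$HOME"/*) return 0 ;;
        esac
    fi
    # Accept anything below the project root.
    case "$dest" in
        "$HEP_PROJECT_ROOT"/*) return 0 ;;
    esac
    return 1
}
```

A transfer script could call is_valid_destination on the chosen folder first and stop with a message if it returns a non-zero status.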

Uploading/Downloading data to/from Aurora from your laptop or workstation

You should avoid doing this. Aurora is not a storage facility and is therefore not meant to be accessed by external sources for data movement. It is possible to do so through the Aurora frontend, but this is extremely slow and will slow down your colleagues' work. Also, the Aurora frontend managers might interrupt your transfers if they see that they are taking too much time. I strongly recommend following the instructions at Uploading/Downloading data to/from an external source to Aurora above instead and, if needed, running an ssh/ftp server on your own laptop or workstation, or asking the sysadmin for a convenient form of online storage.

Resources that can be stored on the GRID should definitely be staged on the Lund GRID storage instead, in one of the ways described under Using GRID tools, so that you can access them from all over the world in the fastest way possible.

Downloading/Uploading data to/from the GRID to Aurora

The recommended way to move data is to use the special fs2-hep storage node.

The SLURM method described below is deprecated and should be used only if the other method doesn't work.

Using GRID tools on fs2-hep (recommended)

From the GRID point of view, our local GRID storage is a storage element, and in the ATLAS information system it has a special name, SE-SNIC-T2_LUND_LOCALGROUPDISK. I will use this name in the documentation below. This storage can be accessed through several protocols, or endpoints, depending on the task you need to carry out. More details about this below. If you already know how data transfer works in ATLAS, just follow this quick reference card by Oxana:

Otherwise read below.

Prerequisites:

The following has to be done in the order it is presented.

- Get a CERN account. Ask your senior team members.

- Get a GRID certificate. There are three options (one of the three is enough):

  - If you belong to Lund University, please follow the instructions at this page at the LDC website (Swedish only).

  - Check with your home university.

  - Check the CERN certificate service: https://ca.cern.ch/ca/

- Ask for membership to the ATLAS VOMS server: https://lcg-voms2.cern.ch

IMPORTANT: Once your certificate is accepted, check the nickname on your personal VOMS page. This nickname will be used to access Rucio. It is usually the same as your CERN account username.

- Identify the names of the datasets you want to handle (usually the senior person in your research group knows)

Setting up the GRID tools

One should interact with the storage using Rucio.

- Set up the ATLAS environment using cvmfs, as explained in cvmfs

- Run:

lsetup rucio

- If asked about the RUCIO_ACCOUNT, make sure to change it to the nickname shown on your VOMS page (see the Prerequisites step).

- Follow the onscreen instructions to generate a valid ATLAS GRID proxy

- Read the Rucio HowTo, hosted by CERN (only accessible with your CERN account): https://twiki.cern.ch/twiki/bin/viewauth/AtlasComputing/RucioClientsHowTo and experiment with the rucio commands.

- Quick general documentation on how to access SE-SNIC-T2_LUND_LOCALGROUPDISK, including the special endpoint names, is available here: http://www.hep.lu.se/grid/localgroupdisk.html

Downloading data from the grid to SE-SNIC-T2_LUND_LOCALGROUPDISK

This task is about moving data within the GRID storage elements. To move a dataset to SE-SNIC-T2_LUND_LOCALGROUPDISK, one needs to submit a data transfer request. This section is a work in progress (WIP).

Downloading data from SE-SNIC-T2_LUND_LOCALGROUPDISK to Aurora

- Select a dataset to download:

rucio list-datasets-rse SE-SNIC-T2_LUND_LOCALGROUPDISK | less

- Select a folder in fs3-hep or fs4-hep as a destination target to download, for example

/projects/hep/fs4/scratch/<yourusername>/test

| machine | folder path | purpose |
|---|---|---|
| fs3-hep | /projects/hep/fs3/shared/ | long term storage of datasets |
| fs4-hep | /projects/hep/fs4/scratch/ | short term storage of datasets |

- Run a Rucio command to perform the transfer:

rucio download --dir <destinationdir> --rse SE-SNIC-T2_LUND_LOCALGROUPDISK <dataset_name>

- Example:

rucio download --dir /projects/hep/fs4/scratch/pflorido/test/ --rse SE-SNIC-T2_LUND_LOCALGROUPDISK mc14_13TeV:mc14_13TeV.147916.Pythia8_AU2CT10_jetjet_JZ6W.merge.DAOD_EXOT2.e2743_s1982_s2008_r5787_r5853_p1807_tid04497456_00

- Result:

[pflorido@fs2-hep ~]$ rucio download --dir /projects/hep/fs4/scratch/pflorido/test/ --rse SE-SNIC-T2_LUND_LOCALGROUPDISK mc14_13TeV:mc14_13TeV.147916.Pythia8_AU2CT10_jetjet_JZ6W.merge.DAOD_EXOT2.e2743_s1982_s2008_r5787_r5853_p1807_tid04497456_00
2017-07-31 15:55:06,158 INFO Starting download for mc14_13TeV:mc14_13TeV.147916.Pythia8_AU2CT10_jetjet_JZ6W.merge.DAOD_EXOT2.e2743_s1982_s2008_r5787_r5853_p1807_tid04497456_00 with 8 files
2017-07-31 15:55:06,161 INFO Thread 1/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000001.pool.root.1
2017-07-31 15:55:06,162 INFO Thread 2/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000002.pool.root.1
2017-07-31 15:55:06,163 INFO Thread 3/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000003.pool.root.1
2017-07-31 15:55:06,334 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000003.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:55:06,336 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000002.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:55:06,442 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000001.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:08,658 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000001.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:08,816 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000001.pool.root.1 successfully downloaded. 3.278 GB in 62.65 seconds = 52.32 MBps
2017-07-31 15:56:08,817 INFO Thread 1/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000004.pool.root.1
2017-07-31 15:56:08,817 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000004.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:25,452 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000003.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:25,570 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000003.pool.root.1 successfully downloaded. 5.598 GB in 79.41 seconds = 70.49 MBps
2017-07-31 15:56:25,570 INFO Thread 3/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000005.pool.root.1
2017-07-31 15:56:25,571 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000005.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:35,012 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000002.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:56:35,185 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000002.pool.root.1 successfully downloaded. 5.480 GB in 89.02 seconds = 61.56 MBps
2017-07-31 15:56:35,185 INFO Thread 2/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000006.pool.root.1
2017-07-31 15:56:35,186 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000006.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:06,869 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000004.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:07,003 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000004.pool.root.1 successfully downloaded. 5.616 GB in 58.19 seconds = 96.51 MBps
2017-07-31 15:57:07,004 INFO Thread 1/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000007.pool.root.1
2017-07-31 15:57:07,004 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000007.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:32,424 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000007.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:32,602 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000007.pool.root.1 successfully downloaded. 2.006 GB in 25.6 seconds = 78.35 MBps
2017-07-31 15:57:32,603 INFO Thread 1/3 : Starting the download of mc14_13TeV:DAOD_EXOT2.04497456._000008.pool.root.1
2017-07-31 15:57:32,603 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000008.pool.root.1 trying from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:34,080 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000005.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:34,206 INFO Thread 3/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000005.pool.root.1 successfully downloaded. 5.681 GB in 68.64 seconds = 82.76 MBps
2017-07-31 15:57:36,718 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000006.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:36,848 INFO Thread 2/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000006.pool.root.1 successfully downloaded. 5.524 GB in 61.66 seconds = 89.59 MBps
2017-07-31 15:57:37,496 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000008.pool.root.1 successfully downloaded from SE-SNIC-T2_LUND_LOCALGROUPDISK
2017-07-31 15:57:37,613 INFO Thread 1/3 : File mc14_13TeV:DAOD_EXOT2.04497456._000008.pool.root.1 successfully downloaded. 352.563 MB in 5.01 seconds = 70.37 MBps
---------------------------------- Download summary ----------------------------------------
DID mc14_13TeV:mc14_13TeV.147916.Pythia8_AU2CT10_jetjet_JZ6W.merge.DAOD_EXOT2.e2743_s1982_s2008_r5787_r5853_p1807_tid04497456_00
Total files : 8
Downloaded files : 8
Files already found locally : 0
Files that cannot be downloaded : 0

Downloading data from any ATLAS SE to Aurora

If you omit the --rse parameter in any of the commands above, Rucio will automatically search for the best Storage Element. However, the speed and duration of such a download are not guaranteed. Please ask the collaboration about their policies regarding such transfers.
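The difference between the two forms of the command can be sketched with a small helper that only prints the command line, so it can be inspected before running it. The helper name build_download_cmd is hypothetical; the only rucio options it uses are --dir and --rse, as shown in the examples above.

```shell
# Prints the rucio download command for a destination folder and a dataset.
# The third argument (the storage element) is optional: when it is missing,
# --rse is left out and Rucio picks the storage element on its own.
build_download_cmd() {
    local dest="$1" dataset="$2" rse="${3:-}"
    if [ -n "$rse" ]; then
        printf 'rucio download --dir %s --rse %s %s\n' "$dest" "$rse" "$dataset"
    else
        printf 'rucio download --dir %s %s\n' "$dest" "$dataset"
    fi
}
```

Calling the helper with two arguments gives the form for any ATLAS storage element, while adding SE-SNIC-T2_LUND_LOCALGROUPDISK as the third argument gives the form used in the previous section.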

Uploading data from Aurora to SE-SNIC-T2_LUND_LOCALGROUPDISK

Before uploading files, it is very important to understand the ATLAS data model. Please read https://twiki.cern.ch/twiki/bin/viewauth/AtlasComputing/RucioClientsHowTo#Creating_data .

It is recommended to use your user scope unless you are uploading data to be shared with the official collaboration. In that case, follow the instructions at the link above. To find your user scope, run:

rucio list-scopes | grep <your_username>

[pflorido@fs2-hep ~]$ rucio list-scopes | grep fpaganel
user.fpaganel

Remember that the names of datasets uploaded to Rucio are universally unique and cannot be changed later, so choose them with extreme care.

The generic command for creating a dataset is as follows:

rucio upload --rse SE-SNIC-T2_LUND_LOCALGROUPDISK user.<username>:<dataset_name> [file1 [file2...]] [/directory]

Here file1, file2 and /directory are the files and directories that will be uploaded into the dataset called <dataset_name>.

Example:

- user scope called user.fpaganel

- dataset called floridotests

- files named ruciotests/downloadfromAU209.txt ruciotests/downloadfromfs2-hep.txt

[pflorido@fs2-hep ~]$ rucio upload --rse SE-SNIC-T2_LUND_LOCALGROUPDISK user.fpaganel:floridotests ruciotests/downloadfromAU209.txt ruciotests/downloadfromfs2-hep.txt
2017-07-31 17:21:50,841 WARNING user.fpaganel:floridotests cannot be distinguished from scope:datasetname. Skipping it.
2017-07-31 17:21:51,469 INFO Dataset successfully created
2017-07-31 17:21:51,632 INFO Adding replicas in Rucio catalog
2017-07-31 17:21:51,726 INFO Replicas successfully added
2017-07-31 17:21:52,872 INFO File user.fpaganel:downloadfromAU209.txt successfully uploaded on the storage
2017-07-31 17:21:53,174 INFO Adding replicas in Rucio catalog
2017-07-31 17:21:53,830 INFO Replicas successfully added
2017-07-31 17:21:55,334 INFO File user.fpaganel:downloadfromfs2-hep.txt successfully uploaded on the storage
2017-07-31 17:21:57,444 INFO Will update the file replicas states
2017-07-31 17:21:57,531 INFO File replicas states successfully updated

Your files are now in the rucio catalog:

[pflorido@fs2-hep ~]$ rucio list-dids user.fpaganel:*
+----------------------------------------------------+--------------+
| SCOPE:NAME                                         | [DID TYPE]   |
|----------------------------------------------------+--------------|
| user.fpaganel:user.fpaganel.test.0004              | CONTAINER    |
| user.fpaganel:floridotests                         | DATASET      |
| user.fpaganel:user.fpaganel.test.0004.140516165935 | DATASET      |
+----------------------------------------------------+--------------+
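Since dataset names cannot be changed after upload, it may help to check the user.<username>:<dataset_name> form before calling rucio upload. The following is a minimal sketch: the function name is_user_did is hypothetical, and the set of characters it accepts (letters, digits, underscore, dot and hyphen) is an assumption for illustration, not the official Rucio naming rule.

```shell
# Returns 0 if the argument looks like user.<username>:<dataset_name>.
# The accepted character set is an assumption, not the official naming policy.
is_user_did() {
    printf '%s\n' "$1" | grep -Eq '^user\.[A-Za-z0-9_]+:[A-Za-z0-9_.-]+$'
}
```

With this check, user.fpaganel:floridotests is accepted, while a name without the user. prefix or without the colon separator is rejected.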

Uploading data from Aurora to any other GRID Storage Element

Please read carefully https://twiki.cern.ch/twiki/bin/viewauth/AtlasComputing/RucioClientsHowTo#Creating_data

Using SLURM Scripts (inefficient, deprecated)

This was a workaround used when there was no data mover frontend (fs2-hep). This technique was discontinued because it uses the 1GB link of the computing node to perform the download, while fs2-hep has a dedicated 10GB link just for transfers.
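To get a feeling for the difference between the two links, the ideal transfer time can be estimated as size times 8 divided by link speed. The sketch below assumes that "1GB" and "10GB" above mean 1 and 10 gigabit per second, and it ignores protocol overhead, disk speed and sharing with other users, so real transfers will be slower. The function name transfer_seconds is hypothetical.

```shell
# Estimates the ideal transfer time in seconds.
# $1 = data size in gigabytes, $2 = link speed in gigabit per second (assumed unit).
transfer_seconds() {
    awk -v size_gb="$1" -v link_gbit="$2" \
        'BEGIN { printf "%.0f\n", size_gb * 8 / link_gbit }'
}
```

Under these assumptions, a 100 GB dataset needs at least 800 seconds on the node link (transfer_seconds 100 1) and at least 80 seconds on the fs2-hep link (transfer_seconds 100 10).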

Particle physicists used to submit grid downloads through the SLURM batch system with a script created by Will Kalderon. He kindly provided a link to the scripts:

https://gitlab.cern.ch/lund/useful-scripts/tree/master/downloading

The scripts are mainly intended for particle physicists. If you need access to them, request clearance from Will. If you see a 404 not found error when clicking the link, it means you have no clearance.